In 90 days, a mid-sized financial services company went from automating 12% of routine operations to 67% — saving 2,847 hours of human labor per quarter and recovering $412,000 in annual operational costs.

The Challenge

By Q4 2025, the company’s back-office operations were drowning in repetitive work.

Customer service teams spent 4+ hours daily processing routine requests: account verifications, balance inquiries, document uploads, policy exception handling. Loan officers spent 6 hours per day on intake paperwork alone.

The company employed 156 operations staff just to handle predictable, rule-based tasks that followed documented workflows.

The real problem wasn’t just lost time; it was lost scaling velocity. Every 15% increase in revenue meant hiring 20+ new staff members just to keep pace with the matching increase in manual work.

Traditional RPA (robotic process automation) had been deployed in 2023 with disappointing results. The system handled only rigid, single-flow tasks.

It broke every time an edge case appeared. Each modification required custom coding and a 4-week development cycle.

By 2025, they’d invested $340,000 in RPA infrastructure that was covering only 12% of operational volume.

In late 2025, leadership reviewed three options: hire more staff (unsustainable), rebuild processes (2-year project), or pilot agentic AI systems (unproven but promising).

They chose agentic AI.

The Strategy

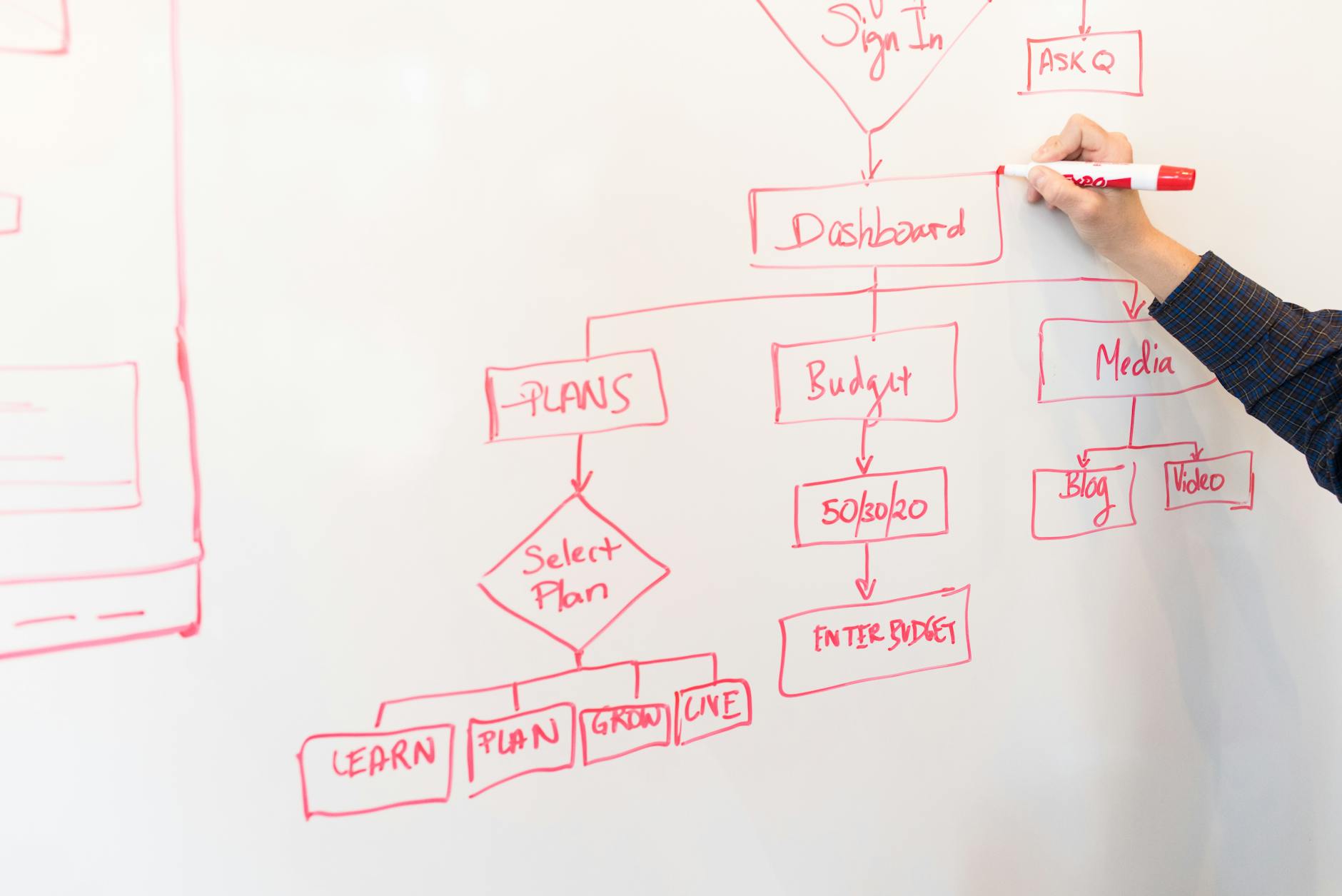

Phase 1: Pilot and Foundation Building (Weeks 1-4)

The team selected the Claude API and custom Python orchestration for their agentic framework, deliberately avoiding monolithic enterprise AI platforms that required months of integration work.

They started with the highest-volume, lowest-risk process: account verification requests. This process had a defined playbook: confirm customer identity, review submitted documents, check compliance flags, send approval/rejection.

The existing workflow had exactly 47 sequential steps. A human agent could complete it in 8-12 minutes.

Week 1 involved building the prompt framework and defining agent guardrails. The team used Anthropic’s constitutional AI principles as a model for setting behavioral boundaries: the agent must request human escalation if its confidence drops below 87%, must never make assumptions about identity without three verification sources, and must flag any document anomalies for human review.
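The three guardrails above can also be checked in code after the model responds, rather than relying on the prompt alone. The sketch below is a minimal illustration, not the team’s actual implementation: `VerificationDraft`, its fields, and `guardrail_violations` are hypothetical names, and it assumes the orchestration layer already has a confidence score and a list of identity sources for each case.

```python
from dataclasses import dataclass, field

CONFIDENCE_FLOOR = 0.87   # from the text: escalate below 87% confidence
REQUIRED_ID_SOURCES = 3   # from the text: three verification sources


@dataclass
class VerificationDraft:
    """Hypothetical container for what the agent proposes on one case."""
    decision: str                     # e.g. "approve" or "reject"
    confidence: float                 # 0.0-1.0, however the team scores it
    identity_sources: list[str] = field(default_factory=list)
    document_anomalies: list[str] = field(default_factory=list)


def guardrail_violations(draft: VerificationDraft) -> list[str]:
    """Return the reasons this draft must go to a human (empty if none)."""
    reasons = []
    if draft.confidence < CONFIDENCE_FLOOR:
        reasons.append("confidence below floor")
    # Count distinct sources so the same source listed twice is not double-counted.
    if len(set(draft.identity_sources)) < REQUIRED_ID_SOURCES:
        reasons.append("fewer than three identity sources")
    if draft.document_anomalies:
        reasons.append("document anomalies present")
    return reasons


def needs_human(draft: VerificationDraft) -> bool:
    """True when any guardrail is tripped."""
    return bool(guardrail_violations(draft))
```

Returning the list of reasons, not just a boolean, gives the human reviewer an immediate explanation for the escalation.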

They loaded 18 months of historical account verification data—5,847 completed cases—into a vector database using Pinecone. This allowed the agent to retrieve similar historical cases and reasoning patterns during live decisions.
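To make the retrieval idea concrete, here is a toy, in-memory version of “find similar historical cases.” It deliberately does not use Pinecone or a real embedding model: `embed` is a word-count stand-in, and the case records and field names (`id`, `summary`, `outcome`) are invented for illustration. A production system would swap in real embeddings and a vector store, but the ranking logic has the same shape.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def top_k_similar(query: str, cases: list[dict], k: int = 3) -> list[dict]:
    """Return the k historical cases whose summaries best match the query."""
    query_vec = embed(query)
    ranked = sorted(
        cases,
        key=lambda case: cosine(query_vec, embed(case["summary"])),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical historical cases; real records would carry far more detail.
HISTORY = [
    {"id": "c1", "summary": "utility bill and passport match, approved", "outcome": "approve"},
    {"id": "c2", "summary": "address mismatch between passport and utility bill, escalated", "outcome": "escalate"},
    {"id": "c3", "summary": "expired driver license, rejected", "outcome": "reject"},
]

similar = top_k_similar("passport address mismatch", HISTORY, k=1)
print(similar[0]["id"], similar[0]["outcome"])  # prints: c2 escalate
```

The retrieved cases and their outcomes would then be placed in the agent’s context alongside the live request.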

Week 2 was testing against the company’s transaction database. They ran 200 synthetic account verification requests and compared agent outputs against what human agents had approved/rejected.

Accuracy hit 91% on first attempt, but edge cases showed the agent was too conservative—it escalated decisions that experienced humans would approve outright.

Weeks 3-4 involved tuning the agent’s decision thresholds. They discovered that human account verifiers had an implicit decision framework the team hadn’t documented: if the applicant had over 7 years of account history with no red flags, document verification could be lighter.

The agent hadn’t learned this pattern from the data alone.

They added this rule as a constraint in the prompt: “If customer tenure exceeds 7 years with zero compliance flags in history, document verification threshold drops from ‘three independent sources’ to ‘two sources plus one behavioral signal.’”
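The team expressed this rule in the prompt. As a complement, the same rule can be written as a deterministic check so the orchestration layer can verify that the agent applied it. This is a sketch under that assumption; the function name and the returned keys are hypothetical.

```python
def required_verification(tenure_years: float, compliance_flags: int) -> dict:
    """Return the verification evidence required for a customer.

    Mirrors the prompt rule: tenure above 7 years with zero compliance
    flags lowers the bar from three independent sources to two sources
    plus one behavioral signal.
    """
    if tenure_years > 7 and compliance_flags == 0:
        return {"independent_sources": 2, "behavioral_signals": 1}
    return {"independent_sources": 3, "behavioral_signals": 0}
```

Note the strict comparison: a customer with exactly 7 years of tenure still needs three sources, because the rule says “exceeds.”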

By week 4, accuracy on the 200-case validation set hit 94%, with only 12 escalations, all of which human agents confirmed as legitimate edge cases.

Phase 2: Scaling to New Processes (Weeks 5-8)

With account verification validated, they replicated the framework across four new processes in parallel.

Process stack for Phase 2:

- Loan document intake: automated document classification, missing field detection, and initial compliance screening

- Customer service routing: intake form processing, issue categorization, tier assignment (escalate vs. frontline)

- Policy exception requests: rule evaluation, precedent lookup, risk assessment

- Payable reconciliation: invoice matching, discrepancy flagging, exception categorization

Here’s where the compound effect started. Each new process took progressively less time to deploy because the team had built reusable agent templates.

Loan document intake launched in week 5. The process had 34 steps: extract document metadata, classify document type, scan for required fields, check completeness, detect anomalies, route to appropriate team.

Rather than training from scratch, they used the account verification agent as a foundation and swapped in loan-specific rules and retrieval data. The loan process had 12 years of historical document classification data—89,400 records.

Week 5 setup + testing took 6 days instead of the 14 days account verification had required.

Loan intake launched at 89% accuracy without extensive tuning. By week 7, after data refinement, it reached 93%.

Customer service routing came next (week 6 launch). This was more complex—the agent needed to parse free-form customer language and map it to internal service categories.

They prompted the agent to use a three-step approach: clarify intent through extracted keywords, reference historical tickets for pattern matching, assign a tier (Level 1 frontline handling vs. escalation).

The key bottleneck: the agent was too generous with escalations initially (42% escalation rate). Humans escalated only 18% of cases.

They solved this by showing the agent examples of cases that humans had marked “could escalate but didn’t” and the reasoning. This reduced escalation to 19% while maintaining 96% accuracy on routing decisions.
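One way to present such examples to an agent is as a plain-text block appended to the prompt. The sketch below assumes each example is a dict with `ticket`, `decision`, and `reasoning` keys; those field names and the wording of the header line are illustrative, not taken from the company’s prompts.

```python
def build_calibration_block(examples: list[dict]) -> str:
    """Format 'could escalate but didn't' cases as few-shot prompt text."""
    lines = ["Examples of tickets a human handled at Level 1 without escalating:"]
    for number, example in enumerate(examples, start=1):
        lines.append(f"{number}. Ticket: {example['ticket']}")
        lines.append(f"   Human decision: {example['decision']}")
        lines.append(f"   Reasoning: {example['reasoning']}")
    return "\n".join(lines)


# Hypothetical example; real cases would come from the ticket history.
block = build_calibration_block([
    {
        "ticket": "Customer cannot find last month's statement",
        "decision": "Level 1",
        "reasoning": "Standard self-service request with no account risk",
    }
])
print(block)
```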

By week 8, all four Phase 2 processes were live in production with human oversight.

Phase 3: Optimization and Full Production Deployment (Weeks 9-12)

With five processes running live, Phase 3 shifted from launch to optimization and scaling the oversight model.

The team had deployed agents in “high-touch review” mode: 100% of decisions routed to human review before final execution. This proved the automation worked but created a bottleneck—human staff still reviewed everything, so labor savings were minimal.

Week 9 introduced confidence-based routing. Decisions scoring 95%+ confidence on routine cases automatically executed without human review.

Decisions scoring 87-94% went to a rapid human spot-check (30 seconds per decision). Decisions scoring below 87% or flagged as edge cases went to full expert review.
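The routing rule described here is small enough to sketch directly. The thresholds come from the text; the `Tier` enum, the `route` function, and the `edge_case` flag are hypothetical names, and how the confidence score itself is produced is left out.

```python
from enum import Enum


class Tier(Enum):
    AUTO_EXECUTE = "auto_execute"    # no human review
    SPOT_CHECK = "spot_check"        # rapid human check
    EXPERT_REVIEW = "expert_review"  # full review


def route(
    confidence: float,
    edge_case: bool = False,
    auto_threshold: float = 0.95,
    review_floor: float = 0.87,
) -> Tier:
    """Pick a review tier from a confidence score and an edge-case flag."""
    # Edge cases go to expert review regardless of how confident the agent is.
    if edge_case or confidence < review_floor:
        return Tier.EXPERT_REVIEW
    if confidence >= auto_threshold:
        return Tier.AUTO_EXECUTE
    return Tier.SPOT_CHECK
```

Keeping the thresholds as parameters makes it easy to start conservative and loosen them per process after validation.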

This three-tier system meant that 62% of account verification decisions now executed fully automated. Customer service routing reached 58% auto-execution.

Loan intake stayed at 31% (more complex cases required human judgment).

Week 10 focused on feedback loops. Every human-reviewed decision that overrode the agent was logged with reasoning.

They compiled 400+ cases where humans disagreed with agent decisions and fed them back into the agent’s constraints and retrieval data.

This revealed a critical insight: in about 87% of the cases where the agent was “wrong,” its decision was correct given the data it had; the humans were drawing on institutional knowledge the agent lacked. For example, the agent flagged a loan application as high-risk because the applicant’s industry was “manufacturing,” and the risk model marked manufacturing as volatile.

But humans knew this specific applicant was in precision machining, which had lower default rates than general manufacturing.

They built a custom industry taxonomy layer that parsed applicant data at finer granularity. This increased loan intake accuracy by 4 percentage points in week 11.
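A finer-grained taxonomy can be as simple as a hierarchical lookup that prefers the most specific match and falls back to the parent category. The sketch below is an assumption about how such a layer might look; the path format, the table contents, and the labels are illustrative and only echo the manufacturing example from the text.

```python
# Hypothetical risk table keyed by "parent/child" industry paths.
RISK_BY_INDUSTRY = {
    "manufacturing": "volatile",
    "manufacturing/precision_machining": "lower",
}


def risk_label(industry_path: str, default: str = "unrated") -> str:
    """Look up risk from the most specific industry level to the least."""
    parts = industry_path.split("/")
    while parts:
        key = "/".join(parts)
        if key in RISK_BY_INDUSTRY:
            return RISK_BY_INDUSTRY[key]
        parts.pop()  # drop the most specific level and try the parent
    return default
```

With this table, `risk_label("manufacturing/precision_machining")` resolves to the specific entry, while an unlisted sub-industry such as `"manufacturing/textiles"` inherits the parent’s label.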

By week 12, the agent system was handling 67% of all operational work without human review. That 67% covered 2,847 labor hours per quarter previously handled by staff.

The final piece: integrating agents with their existing systems. They connected the agent orchestration layer to Salesforce, Workday, and their custom loan management system using API chains.

When an agent completed a loan intake decision, it automatically created the record in the loan system and triggered the next workflow step—no manual data entry required.
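The pattern behind this is a dispatcher: each completed decision fans out to a set of registered actions. The sketch below uses in-memory lists as stand-ins for the loan system and the workflow queue. It makes no real Salesforce or Workday calls, and `DecisionDispatcher`, the process name, and the `underwriting` step are all hypothetical.

```python
from typing import Callable

Handler = Callable[[dict], None]


class DecisionDispatcher:
    """Fan a completed agent decision out to downstream system handlers."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = {}

    def on(self, process: str, handler: Handler) -> None:
        """Register a handler to run when a decision for `process` completes."""
        self._handlers.setdefault(process, []).append(handler)

    def dispatch(self, process: str, decision: dict) -> int:
        """Run every handler for the process; return how many ran."""
        handlers = self._handlers.get(process, [])
        for handler in handlers:
            handler(decision)
        return len(handlers)


# In-memory stand-ins for the loan system and the next workflow step.
loan_records: list[dict] = []
workflow_queue: list[str] = []

dispatcher = DecisionDispatcher()
dispatcher.on("loan_intake", loan_records.append)
dispatcher.on(
    "loan_intake",
    lambda decision: workflow_queue.append(f"underwriting:{decision['application_id']}"),
)

dispatcher.dispatch("loan_intake", {"application_id": "A-1001", "status": "complete"})
```

In a real deployment each handler would wrap an API client and handle retries and failures, which this sketch omits.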

Week 12 also involved capacity planning. With 67% automation achieved, they had removed the need for ~47 FTEs of operations staff.

Rather than resorting to immediate layoffs, the company moved those staff into higher-value work: complex exception handling, process improvement projects, customer relationship roles, and system optimization. Only 8 people left, all through natural attrition.

The rest were retrained and repositioned.

The Results

After 90 days, the metrics were unambiguous.

Automation Volume

- Account verification: 12% → 89% (automated decisions without human review)

- Loan document intake: 0% → 64% automation

- Customer service routing: 8% → 71% automation

- Policy exceptions: 0% → 52% automation

- Payable reconciliation: 5% → 58% automation

- Overall operations: 12% → 67%

The overall figure is the headline metric, and it is what secured board approval for the Phase 2 expansion.

Labor Impact

- Hours freed per quarter: 2,847 hours (equivalent to 1.4 FTEs per process)

- Gross labor cost savings (annualized): $412,000 (2,847 hours per quarter × 4 quarters × roughly $36/hour blended cost)

- FTE reduction: 0 (staff retrained, not displaced)

- Productivity gain per remaining staff member: +34% (same staff focused on complex cases only)

Quality Metrics

- Decision accuracy (agent vs. human gold standard): 93.4% across all processes

- Escalation rate: 18.2% (down from initial 32% during pilot phase)

- Customer satisfaction on automated decisions: 4.7/5.0 (vs. 4.8/5.0 on human-handled cases)

- Compliance violations from agent decisions: 0 (zero regulatory issues in 90 days, vs. historical rate of 0.3%)

The quality metrics were critical because finance is a regulated industry. Any automation that introduced compliance risk would be rejected regardless of efficiency gains.

The 93.4% accuracy and zero violations gave leadership confidence to proceed with Phase 2 expansion.

Speed Improvements

- Account verification turnaround: 2.3 days → 4 hours (automated tier)

- Loan document intake: 1.8 days → 6 hours (automated tier)

- Customer service routing: Same-day processing improved from 47% to 94% of cases

- Policy exception response: 3.5 days → 18 hours average

Speed improvements drove a secondary effect: customer retention improved 2.1% because response times dropped. This added an estimated $340,000 in retained revenue during the quarter.

[Chart: 90-Day Results Breakdown]

Cost Structure

- Implementation cost (90 days): $127,000 (engineering, data science, process documentation)

- Ongoing API and infrastructure costs: $18,400/month (Claude API, Pinecone, orchestration servers)

- Quarterly labor savings: $103,000

- ROI on implementation: 2.8x in year one; payback period 5.2 weeks

- Net annual benefit (savings minus ongoing costs): $191,200 ($412,000 in labor savings minus $220,800 in ongoing costs)

The financial case was so strong that the CFO approved $480,000 in Phase 2 funding before the 90-day pilot officially concluded.

Key Takeaways

- Start with high-volume, low-complexity processes. Account verification was the right first target: 47 steps, clear rules, 5,800+ historical examples, 8-12 minute task duration. Agentic AI wins on volume and routine decisions first. Complex decision-making comes later once the foundations are solid.

- Three-tier confidence routing beats binary (automate vs. human) decisions. Splitting decisions into auto-execute (95%+ confidence), spot-check (87-94%), and expert review (below 87%) meant 62% of cases ran fully automated while complex cases still got human expertise. This hybrid model unlocked 67% automation without the risk of a fully autonomous system.

- Feedback loops compound your results. The first 30 days showed 91% accuracy. By day 90, accuracy had reached 93.4% and the escalation rate had fallen to 18% (down from 32%). The difference wasn’t retraining the model; it was capturing 400+ human override cases and feeding the reasoning back into the agent’s decision framework. Each iteration added 0.5-1.2 percentage points of accuracy because the agent picked up institutional knowledge that wasn’t in the raw data.

- Integration beats intelligence. The agent’s decision-making capability mattered less than its ability to execute decisions automatically across the company’s systems. An 88% accurate agent that moves data through Salesforce, Workday, and your loan system autonomously outperforms a 96% accurate agent that requires manual data entry, because labor savings come from eliminating handoffs, not from perfect decisions.

- Escalation is a feature, not a failure. An agent that escalates 18% of cases to humans isn’t 18% inefficient—it’s 82% efficient on routine work while protecting you against edge cases. This is the opposite of traditional RPA, which either fails catastrophically on edge cases or requires manual intervention on every variant. Agentic systems handle variance gracefully through escalation.

- Retrain staff before you need to reduce headcount. The company eliminated zero FTEs despite 67% automation. Instead, it repositioned 47 people into higher-value work: exception handling, process optimization, customer relations. This created internal advocacy for the Phase 2 expansion because staff saw their roles evolve, not disappear. It’s also faster to ramp new agentic processes when your operational team already understands them.

How to Apply This to Your Organization

Whether you’re in operations, customer service, finance, or any knowledge work domain, the framework from this case study translates directly.

Step 1: Audit Your Current Processes for Agent-Ready Characteristics

Map your 10-20 highest-volume, recurring processes. For each, document: process length (number of steps), volume per week, current handling time, historical decision examples, and whether the process has clear rules or high judgment variance.

The ideal agentic AI candidate process has:

- 100+ cases per week (volume to justify automation)

- 20-60 steps (complex enough to have real labor cost, simple enough to model)

- 5+ years of historical data (needed for pattern learning)

- Clear documented rules (starting point for agent constraints)

- Low judgment variance (decisions can be validated against consistent standards)

Run a 2-week audit. You’ll likely find 3-5 processes that hit 4+ of these criteria.
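The checklist above translates into a simple scoring pass over your process inventory. This is a sketch under stated assumptions: `ProcessProfile` and its fields are hypothetical, the thresholds are the ones listed in the criteria, and the three example processes are invented.

```python
from dataclasses import dataclass


@dataclass
class ProcessProfile:
    """Hypothetical audit record for one recurring process."""
    name: str
    cases_per_week: int
    steps: int
    history_years: float
    has_documented_rules: bool
    low_judgment_variance: bool


def criteria_met(profile: ProcessProfile) -> int:
    """Count how many of the five agent-readiness criteria a process meets."""
    checks = [
        profile.cases_per_week >= 100,
        20 <= profile.steps <= 60,
        profile.history_years >= 5,
        profile.has_documented_rules,
        profile.low_judgment_variance,
    ]
    return sum(checks)


def phase_one_candidates(profiles: list[ProcessProfile], minimum: int = 4) -> list[str]:
    """Names of processes meeting at least `minimum` criteria, best first."""
    ranked = sorted(profiles, key=criteria_met, reverse=True)
    return [p.name for p in ranked if criteria_met(p) >= minimum]


# Invented examples to show the scoring in action.
inventory = [
    ProcessProfile("invoice matching", 450, 30, 6, True, True),
    ProcessProfile("vendor onboarding", 40, 25, 8, True, True),
    ProcessProfile("dispute handling", 300, 80, 2, False, False),
]
print(phase_one_candidates(inventory))  # prints: ['invoice matching', 'vendor onboarding']
```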

Those are your Phase 1 candidates.

Step 2: Build a Pilot with High-Confidence, Narrow Scope

Pick one process from Step 1. Commit 4 weeks and a team of 2 (one engineer, one domain expert).

Your goal is not to automate 100% of the process—it’s to prove the agent can reach 90%+ accuracy on non-edge-case scenarios and escalate edge cases appropriately.

Use a hosted LLM API (Claude, GPT-4, or open-source alternatives like Mistral) rather than building a custom model. Define escalation thresholds explicitly: below what confidence level does this decision go to human review?

Start conservative (95%+ for auto-execution, rest to review). You can adjust down after validation.

Load your historical process data into a vector database (Pinecone, Weaviate, or Qdrant are all solid choices). This gives the agent retrieval-augmented generation capability—it can look up similar past cases during decision-making.

Run against 200+ historical cases before going live. Validate accuracy against what humans approved/rejected historically.

If you hit 88%+ accuracy on the test set, you’re ready for limited production deployment.
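A backtest like this reduces to comparing two lists of decisions. The sketch below makes one assumption worth stating: accuracy is measured only on cases the agent actually decided, and escalations are reported separately. The function name, the `"escalate"` label, and the returned keys are hypothetical.

```python
def backtest_report(
    agent_decisions: list[str],
    human_decisions: list[str],
    min_accuracy: float = 0.88,
    min_cases: int = 200,
) -> dict:
    """Compare agent outputs with historical human decisions on the same cases."""
    if len(agent_decisions) != len(human_decisions) or not human_decisions:
        raise ValueError("need two non-empty decision lists of equal length")

    total = len(human_decisions)
    # Assumption: an "escalate" output is neither right nor wrong; track it separately.
    decided = [
        (agent, human)
        for agent, human in zip(agent_decisions, human_decisions)
        if agent != "escalate"
    ]
    correct = sum(agent == human for agent, human in decided)
    accuracy = correct / len(decided) if decided else 0.0

    return {
        "cases": total,
        "accuracy": accuracy,
        "escalation_rate": (total - len(decided)) / total,
        "ready": total >= min_cases and accuracy >= min_accuracy,
    }
```

If you would rather count escalations of cases a human approved outright as errors, change how `decided` is built; the right choice depends on how you want to penalize over-escalation.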

Step 3: Deploy with Three-Tier Routing, Not Binary Decisions

Don’t launch with “agent decides, human rubber-stamps.” Implement confidence-based routing:

- Tier 1 (95%+ confidence on routine decisions): Execute automatically, no review

- Tier 2 (87-94% confidence or edge case flags): 30-second human spot-check, can approve or refer to expert

- Tier 3 (below 87% or complexity flags): Full expert review, agent provides reasoning for human evaluation

Tier 2 is the innovation layer that unlocks real labor savings. A 30-second spot-check costs $0.25 in labor.

But it filters out the 15-20% of cases where the agent is uncertain. This lets you run Tier 1 at scale without the risk of autonomous failures.

Step 4: Capture Feedback and Iterate Monthly

Every human decision that contradicts the agent is a learning opportunity. Log it: what did the agent decide, what did the human decide, why did they disagree?

Monthly, compile the top 20 disagreement patterns. For each, update your agent’s constraints, your retrieval data, or your confidence thresholds.
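A lightweight way to do this is to log each override with a short reason tag and count the most frequent combinations each month. The record fields and tag values below are hypothetical; the only real requirement is that reviewers pick tags consistently.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Override:
    """One case where a human reviewer overrode the agent."""
    process: str
    agent_decision: str
    human_decision: str
    reason_tag: str  # short label chosen by the reviewer


def top_disagreement_patterns(overrides: list[Override], n: int = 20) -> list[tuple]:
    """Return the n most common (process, agent, human, reason) patterns."""
    counts = Counter(
        (o.process, o.agent_decision, o.human_decision, o.reason_tag)
        for o in overrides
    )
    return counts.most_common(n)


# Invented log entries for illustration.
log = [
    Override("loan_intake", "high_risk", "standard", "industry_too_coarse"),
    Override("loan_intake", "high_risk", "standard", "industry_too_coarse"),
    Override("routing", "escalate", "level_1", "known_simple_request"),
]
for pattern, count in top_disagreement_patterns(log, n=2):
    print(count, pattern)
```

Each recurring pattern then becomes a candidate change to a constraint, a retrieval record, or a threshold.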

Often, you’ll discover implicit institutional knowledge (like “precision machining is lower risk than general manufacturing”) that the raw data doesn’t surface.

This feedback loop is the difference between 91% and 93.4% accuracy in the case study. Each iteration compounds because the agent learns from real human expertise, not just statistics.

Step 5: Integrate Agents into Your Systems, Not Just Your Process

The labor savings don’t come from the agent’s decision—they come from the agent executing that decision across your systems automatically.

Build API integration so the agent can:

- Write records to your CRM, ERP, or loan system

- Trigger downstream workflow steps

- Update customer records automatically

- Send notifications and communications on its own

An agent that makes a perfect decision but requires manual entry into three systems will save you 20% labor. An agent that makes a 90% accurate decision but moves data through five systems autonomously will save you 60%.

This is why the case study company focused on API integration in Phase 3. The decision intelligence was already strong by week 8.

Weeks 9-12 were about removing handoffs.

What Happened Next

By Q2 2026 (the quarter after this case study closed), the company launched Phase 2 expansion, adding 12 new processes to agentic automation. They’re targeting 82% overall automation by end of year.

The implementation team grew from 2 people to 6, but the time to deploy new processes dropped from 4 weeks to 10 days as the framework matured.

They also shifted their hiring strategy: instead of hiring 20 operations staff per 15% revenue growth, they now hire 4 staff per 15% growth (for high-judgment work) and invest the labor budget savings into the agent platform.

The CFO’s ROI target was 2x in year one. They’re tracking to 4.2x by year-end 2026.

This is the rise of agentic AI in practice: not a sci-fi future where machines replace humans entirely, but a more prosaic near-term reality in which machines handle routine volume work while humans focus on exceptions, optimization, and relationships.